The 74% Problem: Why Most Companies Are Getting AI Wrong

I’ve seen this before…

The pattern. The rush, the excitement, the scramble to adopt something new before anyone has stopped to ask what problem it actually solves.

The first twenty years of my career were in print. Fifteen of those were at one of the biggest publishing companies in Europe, working across some of the biggest news brands in the UK. I was there throughout the digital revolution from the early 2000s. Back in 2010, I helped launch the iPad edition of The Times of London.

And what I watched happen in publishing was instructive. The organisations that failed weren’t the ones that ignored digital. They were the ones that adopted digital tools without rethinking their model. They had a website. They launched an app. They moved content online. But they didn’t ask the harder questions.

What is our content strategy for a digital audience? What’s our revenue model and how should it change? What relationship do we want with readers who behave differently online and consume content in a completely different way?

The tools arrived before the thinking. Tragically, some of those organisations are gone now, or are shadows of what they once were.

I see the same thing happening with AI. Only this time, the stakes are higher. When AI disrupts, it disrupts everything simultaneously. Every industry, every function, every workflow. The risk of getting the sequence wrong is greater. But the opportunity for those who get it right is bigger. I’m not pessimistic about AI. Quite the opposite. I’m incredibly optimistic.

I believe the organisations that will benefit most from AI aren’t necessarily the fastest to adopt. They’re the ones willing to do the thinking first.

The problem is the problem

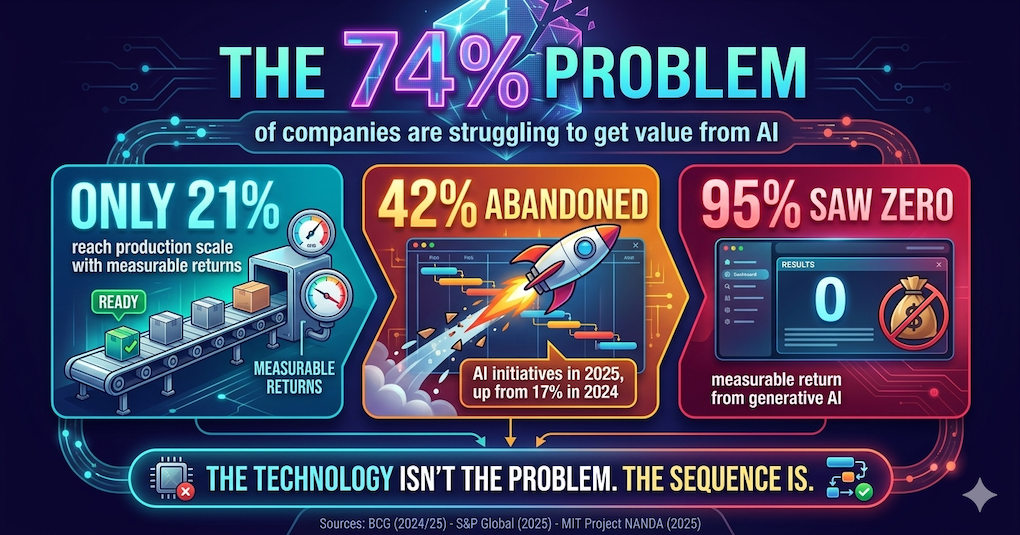

Here’s a number that should make every business leader feel a little uncomfortable.

74% of companies are struggling to get value from AI. Not because the technology doesn’t work, but because they skipped the strategy. Only 21% have developed the capabilities to move beyond proof-of-concept and into production at scale.

That’s not a technology problem. That’s a planning, strategy and humanity problem.

Think about it this way. Imagine spending $50,000 on a beautiful new kitchen. Top-of-the-range appliances, integrated smart tech, the lot. Only to realise nobody in the house can cook. That’s what most organisations are doing with AI right now.

And the data backs this up across several dimensions.

- 88% of organisations use AI in at least one function, but nearly two-thirds remain stuck in experiment or pilot mode.

- Last year, 42% of companies abandoned most of their AI initiatives, up from 17% in 2024.

Did the tools get worse? No. The tools got better.

What changed? Organisations rushed in without doing the strategy work first.

Tool-first versus problem-first

This is the fundamental distinction that most companies are getting wrong.

Tool-first thinking asks, “What can this technology do?” and searches for places to apply it. Problem-first thinking asks, “What problems cost us the most?” and evaluates whether technology can help.

AI without strategy is like having the world’s best SatNav but no destination. It will guide you beautifully, avoiding roadworks and traffic jams. But if you don’t know where you’re going, all you’ve done is get lost more efficiently.

We are a society with an adoption addiction. We're incredibly good at buying things and incredibly bad at knowing why we bought them. The stack of unread books on my bookshelf is proof enough of that. Aspiration and ambition outpace implementation and reality.

And this is not a new pattern. I’ve observed a repeatable trend over the years in tech.

- ERP implementations, the big SAP and Oracle rollouts, failed or underdelivered at a rate of 50-75%.

- CRM platforms like Salesforce and Dynamics failed at 55-70%.

- Cloud migrations stalled for about half the organisations that attempted them.

- Marketing automation platforms challenged 73% of the businesses that deployed them.

- And AI right now is tracking at 74-85% underdelivery.

Different decades. Completely different technologies. Remarkably similar failure rates.

That’s not a coincidence. That’s a pattern. And what it tells you is that the problem was never the technology. The technology got better every single time. The failure rate didn’t.

The problem is the sequence of operations. Every single wave, organisations led with the tool and tried to retrofit the strategy. Every single wave, it cost them. AI is not different. It is the latest chapter in a story we keep telling ourselves, but seem strangely unable to learn from.

The 70% nobody talks about

Boston Consulting Group’s research frames this more precisely than I ever could. Their 70-20-10 rule states that:

- 70% of AI implementation problems are the result of people and processes

- 20% are due to the underlying business technology

- only 10% are due to the AI itself

Reframe that: for every $1 you spend on AI technology, you should be spending $7 on people and processes.

Most budgets I see are the exact opposite.
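To make that arithmetic concrete, here is a minimal sketch that splits a programme budget along the 70-20-10 proportions. It assumes the rule is applied directly to spend, and that "AI technology" maps to the 10% share; the function name `split_budget` and the example total are hypothetical, used only for illustration.

```python
from fractions import Fraction

# Shares taken from the 70-20-10 rule described above.
# Mapping them straight onto budget lines is an assumption of this sketch.
SHARES = {
    "people_and_process": Fraction(70, 100),
    "business_technology": Fraction(20, 100),
    "ai_itself": Fraction(10, 100),
}


def split_budget(total):
    """Return the amount allocated to each category for a given total budget."""
    return {name: float(total * share) for name, share in SHARES.items()}


allocation = split_budget(800_000)  # hypothetical total budget
ratio = allocation["people_and_process"] / allocation["ai_itself"]

for name, amount in allocation.items():
    print(f"{name}: {amount:,.0f}")
print(f"People-and-process spend per 1 unit of AI spend: {ratio:.0f}")
```

Running the same split against your actual budget lines is a quick way to see how far the current allocation sits from the proportions the rule implies.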

MIT’s Project NANDA found in their 2025 “GenAI Divide” study that 95% of organisations deploying generative AI saw zero measurable return. Not low return. Zero. And the failure was almost never the model. It was data readiness, workflow integration and the absence of a defined outcome before the build started.

What companies are missing is the fact that implementing AI is a significant change management exercise. It affects your people, your systems and your processes. The reason AI adoption is failing is that companies are underestimating the effort, thought, planning and strategy that go into an effective piece of change management.

Why AI fear is different

For all the hype and excitement, I don’t think we’ve ever witnessed a paradigm shift quite like AI when it comes to resistance.

Traditional tech resistance is about learning curves and workflow disruption. “I don’t know how to use this new system”, or “This changes my daily routine.” Whilst it might be uncomfortable, it is ultimately manageable.

AI resistance is existential. It touches on identity, relevance and survival.

EY’s AI Anxiety in Business survey found that:

- 75% of employees are concerned AI will make certain jobs obsolete.

- 65% are anxious about AI replacing their own role.

The good news? With genuine understanding, training and support, it is possible to move past this. But it starts with empathy.

- Build with your employees, not for them. Treat your teams as co-designers, not end users.

- Reframe the narrative from “AI is taking over X” to “AI frees you to focus on X.”

- Explain how AI does what it does, because transparency kills fear.

- Start with genuinely useful quick wins that make people’s jobs better, not just cheaper.

Change management is the secret sauce

You could be forgiven for thinking much of this is common sense. But you’d be amazed at how many organisations overlook the complexity of change.

Change management is the single biggest predictor of whether AI initiatives succeed or fail. Not the model. Not the platform. Not the budget. The people.

Prosci’s research, spanning over 25 years of benchmarking data, shows that projects with excellent change management are 7x more likely to meet their objectives. Conversely, organisations lose 60-80% of expected benefits when change management is poor. Globally, an estimated $2.3 trillion is wasted annually on failed digital transformation programmes.

And yet the investment gap is staggering. AI spending is projected to rise 44% in 2026 to $2.5 trillion globally, according to Gartner. Training and change management investment is growing at a fraction of that rate. We’re giving people Ferraris but no driving lessons. That gap is not sustainable. The organisations closing it are the ones pulling ahead. The ones ignoring it are storing up a very expensive problem.

What “strategy-first AI” actually looks like

Before you deploy any AI, ask one question: “What job is this actually trying to do?” If you cannot answer that in one sentence, you are not ready.

Organisations should be able to answer questions across four key areas before going anywhere near technology.

- Business alignment. What specific problems are we solving? How do we measure success? Which use cases have the highest impact-to-effort ratio?

- Data readiness. Is our data clean, sufficient and accessible? Is data lineage traceable? 43% of businesses cite data quality as their number one AI obstacle.

- Governance and compliance. Who owns AI outputs? What regulations apply, from GDPR to the EU AI Act? How do we protect brand IP? Organisations with well-defined governance typically deploy AI 40% faster, not slower.

- Organisational readiness. Is leadership actively sponsoring this? Does the team have the skills, support and desire to change? Are you genuinely ready to support your people to be successful?
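The impact-to-effort question in the business alignment area can be sketched as a simple ranking. This is a minimal illustration, not a scoring method from any of the frameworks mentioned here: the `UseCase` class, the 1 to 10 scales and the example scores are all hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: float  # estimated business impact, 1 (low) to 10 (high); hypothetical scale
    effort: float  # estimated delivery effort, same 1 to 10 scale

    @property
    def ratio(self) -> float:
        """Impact delivered per unit of effort."""
        return self.impact / self.effort


def prioritise(use_cases):
    """Sort use cases from highest to lowest impact-to-effort ratio."""
    return sorted(use_cases, key=lambda u: u.ratio, reverse=True)


# Illustrative candidates with made-up scores.
candidates = [
    UseCase("Experiment planning", impact=8, effort=4),
    UseCase("Content adaptation", impact=6, effort=2),
    UseCase("Enterprise-wide chatbot", impact=9, effort=9),
]

ranked = prioritise(candidates)
for use_case in ranked:
    print(f"{use_case.name}: {use_case.ratio:.1f}")
```

The scores themselves matter less than the conversation needed to agree on them; the ranking simply makes the trade-off visible before any platform is chosen.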

There are well-established frameworks out there already. Gartner has their AI maturity model. IBM has their AI ladder. The 5P framework centres around Purpose, People, Process, Platform and Performance. What most of these frameworks have in common is that the majority of organisations start too far down the pipeline at Platform. Or to put it another way, they start building the house with the wallpaper.

At Niteco, we saw this gap firsthand. After listening to our clients, we recognised that businesses were struggling to know where and how to start. So we built our own strategic AAA framework, structured around three phases: Audit, Adopt and Accelerate.

At the Audit level, it delivers an AI readiness scorecard and a 30-day action plan with business-relevant use cases. Think of this as walking.

At the Adopt level, it provides structured discovery, a governance plan and a 6-12 month implementation roadmap with defined success criteria. Think of this as running.

At the Accelerate level, it provides true operational integration, embedding AI into day-to-day operations to drive efficiency and growth. Think of this as flying.

The key distinction? It’s built around the client’s specific business workflows, not around the technology. The technology comes third. People and strategy come first.

The role of Opal

Opal is the intelligence layer running through everything: CMS, CMP, Experimentation, Commerce, and your Data Platform.

It's an agent orchestration platform, and that is a meaningful distinction.

You can build agents, chain them together, and set them running autonomously on a schedule.

One workflow can handle ideation, planning, variant creation and results summarisation, with or without anyone pressing a button in between.

And critically, it draws on your own data. Your brand guidelines. Your past campaign performance. Your experiment history. That's what separates it from a generic AI tool. It knows your brand deeply.

But before you switch it on, focus on these four things:

- First, define your outcomes. Not “we want to use AI more.” What does success look like in 90 days? How many experiments per week? What's your time to publish? If you can't measure it before Opal, you can't prove Opal moved it after.

- Second, brand infrastructure. Opal has an instructions layer and it genuinely transforms the quality of output. Brand voice, tone of voice, content guidelines, target personas. It's the difference between generic content and content that sounds like you.

- Third, clean content architecture. One of the demos I've seen shows Opal working with a landing page, scanning it, checking content types, adding structured metadata for AI discoverability. That only works if what's underneath is solid. Opal can't structure what's already broken.

- Fourth, a specific use case. Not a general instruction to use AI more. One problem. One workflow. Something measurable. Prove value, build confidence, then expand. Perhaps start with experiment planning, perhaps with content adaptation. But start somewhere specific.

Clients could be forgiven for thinking that Opal is primarily a content tool. That's understandable, because a lot of marketing is content-led.

But nearly 60% of all Opal agent usage is in experimentation. That's where it's being used most heavily and where the results are strongest.

Teams using it across the full experimentation lifecycle are running 78% more experiments. Experiments are starting 19% faster. They're reaching statistical significance 25% faster.

What good looks like in practice

Once the strategy is in place, the results can be remarkable.

But here’s the important caveat. None of this works without the strategic groundwork being done first.

I read a quote recently that sums up the danger perfectly: “Agents amplify strategy when it exists and amplify chaos when it doesn’t.”

Organisations that go straight from buying an AI platform to deploying agents won’t just fail to see value. Worse. They’ll encode their lack of strategy into AI systems that then execute bad processes really, really efficiently. Speed in the wrong direction is not progress.

At Niteco, we've built a really cool Automated Experimentation agentic workflow in Opal.

- We use Opal to analyse a webpage.

- Then it combines real user behaviour data from GA4 and brings in proven UX research from Baymard.

- It then identifies opportunities and automatically suggests and prioritises experiments.

- It goes a step further by creating those experiments directly in Web Experimentation, building your entire experimentation roadmap for you.

- Outcome: your web manager can do in minutes what would traditionally require a full experimentation team, including a UX researcher, analyst, designer, developer and CRO specialist.

- It’s pretty much an entire CRO team in your pocket!

We've also built an Event Campaign Launch agentic workflow.

The work of the marketing, digital and social teams is orchestrated through Opal.

- Creates a landing page or a microsite in your CMS

- Generates images

- Writes the copy

- Creates social posts (and images) to launch the event

- Schedules and posts them directly to the social platforms

- The other cool thing is that it connects all those systems (your CMS, your CMP if you have one, and your social channels) together in a simple chat window

The 10 questions to ask before you turn on AI

If you take one thing from this article, take these ten questions. Share them with your team tomorrow. Print them out and pin them to the wall.

If you cannot answer all ten, that should tell you something. It should tell you that you’re not ready yet. And that is absolutely fine. Answering them IS the strategy.

1. What specific business problem are we trying to solve?

Without a defined problem, AI is a solution in search of a use case. 46% of AI proofs-of-concept are discarded.

2. How do we measure success today, and what does “good” look like?

You cannot demonstrate ROI without baselines.

3. Is our data clean, structured and accessible?

43% of businesses cite data quality as their number one AI obstacle.

4. Who owns this initiative and who is accountable?

Two out of five AI projects stall due to ownership ambiguity.

5. Have we defined governance, brand guardrails and compliance rules?

Ungoverned AI creates risk faster than value.

6. Do our teams have the skills, training and desire to change?

The skills gap is the #1 adoption barrier. Businesses are not investing enough in training. Not even close.

7. Are the processes AI will touch already working?

It may sound obvious, but automating a broken process simply produces broken outputs faster. Fix before you automate.

8. Is leadership actively sponsoring, or just approving the budget?

AI high performers are 3x more likely to have active senior leadership engagement.

9. What is our change management plan?

Integrated change management makes projects 47% more likely to succeed.

10. Are we prepared to start small, learn and iterate?

The expectation of immediate enterprise-wide transformation is the enemy of progress.

The sequence matters

Five things to take away.

- The problem is the problem. Most organisations are focused on the AI, not on what they’re trying to fix. If you can’t define your use case in one sentence with a number in it, you’re not ready. The technology is not the starting point. The problem is.

- People and process, not technology, determine whether this works. 70% of AI failures are a people and process problem. For every pound you spend on AI technology, you should probably be spending seven on the humans and workflows around it. Build with your people, not for them. That distinction matters more than any framework.

- AI without a strategy doesn’t fail quietly. It fails efficiently. Agents amplify strategy when it exists and amplify chaos when it doesn’t. The risk is not that AI doesn’t work. The risk is that it works very well in the wrong direction.

- Change management is the single biggest predictor of success. Not the model. Not the platform. Not the budget. The people. Projects with excellent change management are seven times more likely to meet their objectives. And yet AI spending is rising at many times the rate of training investment. That gap is not sustainable.

- The organisations that benefit most are not the fastest to adopt. They’re the ones who think first. We’ve seen this pattern before. Different decades, different technologies, remarkably similar failure rates. The technology got better every time. The failure rate didn’t. Why? Because the order of operations never changed.

People first. Strategy second. AI third. Get the sequence right.

This article has been adapted for the Podcast with Patrick series on the Optimizely Academy. You can watch that here
