CI/CD using Episerver DXP Deployment API and multi-stage YAML pipelines - Part 2

Recap

In my previous post, I explored the possibility of using Release Flow as a branching strategy for Episerver DXP deployments.

Moving on to the Deployment workflow, there are already a number of excellent blog posts like this one on how to use the Deployment API with Azure DevOps Classic pipelines.

When I started working with the Deployment API, my goal was to create reusable YAML pipelines that I can use across projects for CI/CD workflows.

Why YAML

Multi-stage pipelines allow for a combined build and release pipeline in one YAML file. The key benefits of using YAML over Classic pipelines are:

- The YAML file is source-controlled, so you can treat it like any other file in the repository: view its history, branch it, and peer review changes.

- It can be easily forked/copied to new projects so you can have standardised pipelines across your projects.

- It provides a unified experience and view of your deployment pipeline.

Show me the YAML

The following YAML pipeline files can be found in the GitHub repository - https://github.com/rrangaiya/epi-dxp-devops.

- Integration - a pipeline to build and deploy to the Integration environment.

- Release - a pipeline to build and deploy a release candidate to Preproduction and Production environments.

These reusable pipelines are only a guide, based on my experience with Episerver DXP projects. They follow the Release Flow branching strategy but can be tweaked to suit your project requirements.

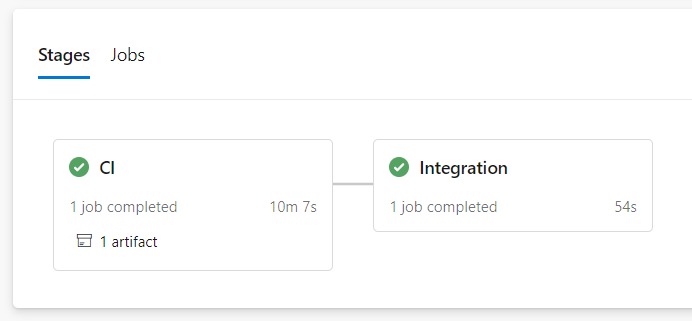

Integration pipeline

This pipeline is set up to run as part of the continuous integration workflow, i.e. when code is merged to the master branch. It creates a web package and deploys it to the Integration environment using the Azure App Service Deploy task rather than the Deployment API. Deployments done using the Deployment API take around 30 minutes. As this pipeline will be run frequently, a fast deployment time (~1 min) to Integration ensures developers and testers are not waiting to test deployed features.

- CI stage - builds the solution, runs any unit tests, creates a web package.

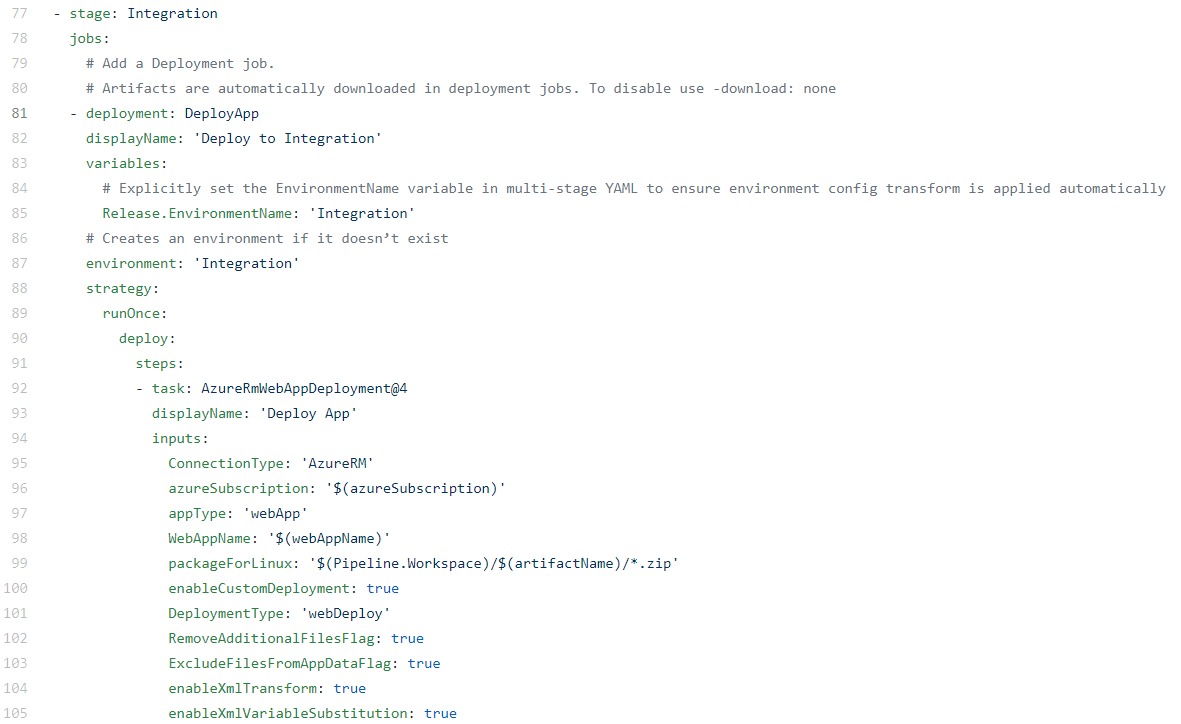

- Integration stage - deploys to Integration. This requires setting up a service connection to your DXP Azure subscription on Azure DevOps. The service principal details required for the connection can be requested from Episerver Managed Services.

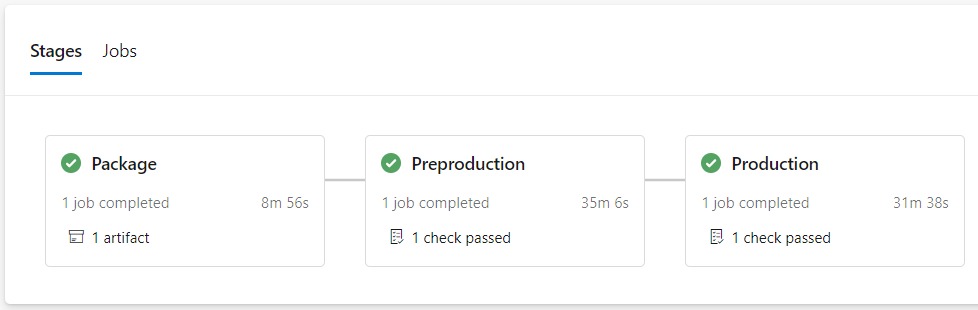
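To make the two stages above more concrete, here is a minimal sketch of what such an Integration pipeline could look like. It is not the file from the repository: the solution glob, the artifact name `drop`, the MSBuild arguments, and the placeholders in angle brackets are all assumptions to adapt to your project.

```yaml
# Sketch only: values in angle brackets are placeholders, not real names.
trigger:
  branches:
    include:
      - master

pool:
  vmImage: 'windows-latest'

variables:
  buildConfiguration: 'Release'

stages:
  - stage: CI
    jobs:
      - job: Build
        steps:
          - task: NuGetToolInstaller@1
          - task: NuGetCommand@2
            inputs:
              command: 'restore'
              restoreSolution: '**/*.sln'
          - task: VSBuild@1
            inputs:
              solution: '**/*.sln'
              configuration: '$(buildConfiguration)'
              # Assumed MSBuild arguments: produce a single Web Deploy zip package
              msbuildArgs: '/p:DeployOnBuild=true /p:WebPublishMethod=Package /p:PackageAsSingleFile=true /p:PackageLocation="$(Build.ArtifactStagingDirectory)"'
          - task: VSTest@2
            inputs:
              configuration: '$(buildConfiguration)'
          # Publish the web package so the next stage can pick it up
          - publish: '$(Build.ArtifactStagingDirectory)'
            artifact: 'drop'

  - stage: Integration
    dependsOn: CI
    jobs:
      - deployment: Deploy
        environment: 'Integration'
        strategy:
          runOnce:
            deploy:
              steps:
                # Deployment jobs download pipeline artifacts to $(Pipeline.Workspace)
                - task: AzureRmWebAppDeployment@4
                  inputs:
                    ConnectionType: 'AzureRM'
                    azureSubscription: '<service connection name>'
                    appType: 'webApp'
                    WebAppName: '<integration app service name>'
                    packageForLinux: '$(Pipeline.Workspace)/drop/*.zip'
```

The service connection referenced by `azureSubscription` is the one created from the service principal details provided by Episerver Managed Services.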

Release pipeline

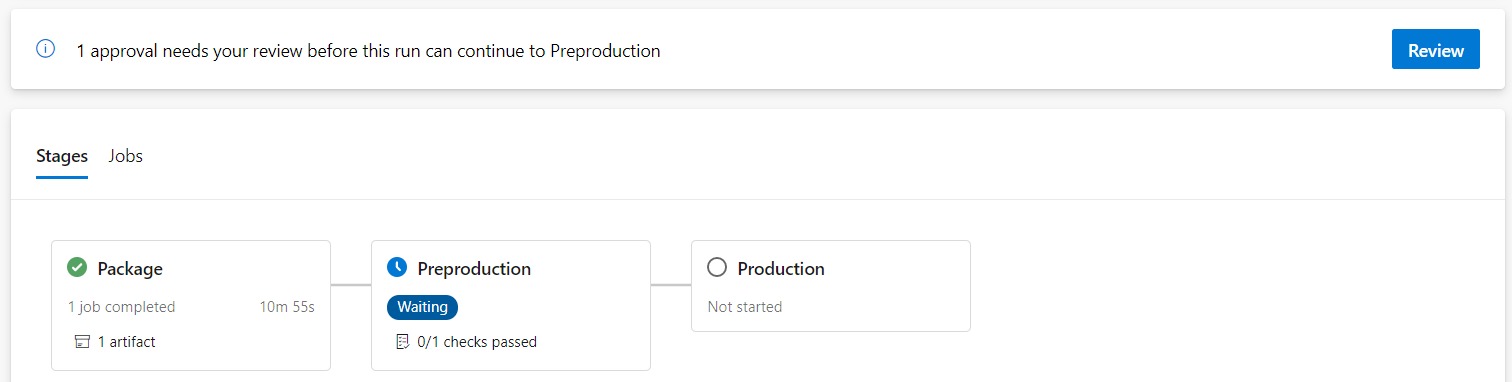

This pipeline is triggered on any change to a release/* branch. It follows the "Build once, deploy many" DevOps convention using the Deployment API code package approach.

- Package stage - builds the solution, runs any unit tests, creates a NuGet package, and uploads it to Episerver DXP for deployment.

- Preproduction stage - on approval, deploys the package to the Preproduction environment.

- Production stage - on approval, deploys the package to the Production environment.

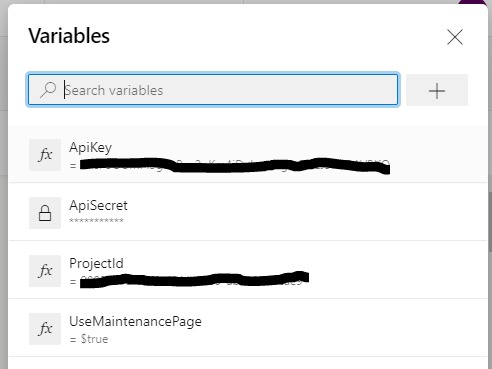
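The three stages listed above could be laid out roughly as follows. This is a skeleton under assumptions rather than the pipeline from the repository: the job names are illustrative and the `echo` steps only stand in for the real build and deployment tasks.

```yaml
# Skeleton only: the script steps are stand-ins for the real tasks.
trigger:
  branches:
    include:
      - release/*

pool:
  vmImage: 'windows-latest'

stages:
  - stage: Package
    jobs:
      - job: BuildAndUpload
        steps:
          - script: echo "Build, test, create the NuGet package, upload it to DXP"

  - stage: Preproduction
    dependsOn: Package
    jobs:
      - deployment: DeployPreproduction
        # Approvals are configured on the environment, not in the YAML
        environment: 'Preproduction'
        strategy:
          runOnce:
            deploy:
              steps:
                - script: echo "Deploy to slot, validate, then complete or reset"

  - stage: Production
    dependsOn: Preproduction
    jobs:
      - deployment: DeployProduction
        environment: 'Production'
        strategy:
          runOnce:
            deploy:
              steps:
                - script: echo "Deploy to slot, validate, then complete or reset"
```

Because the package is built and uploaded once in the Package stage, both deployment stages deploy the same artifact.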

Pipeline variables

The following variables for the Deployment API credentials need to be added on the pipeline from the UI:

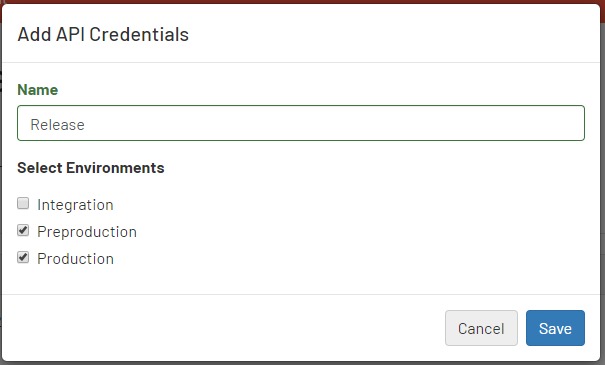

The ApiSecret variable should be created as a secret variable. Deployment API credentials are added in the DXP Portal, where you can select multiple environments for a credential. I have set up each credential to match the environments the pipeline will deploy to, which ensures that a credential is only used for its intended purpose.

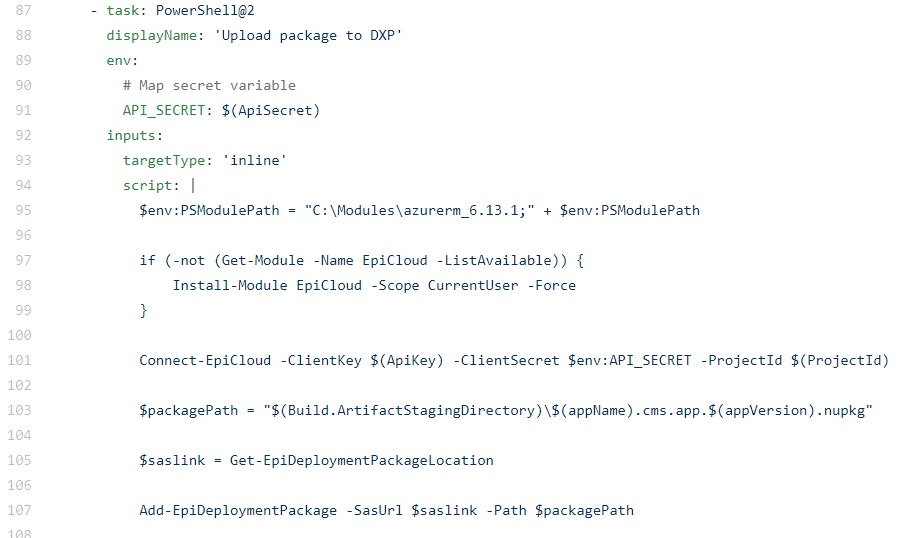

Upload Package

The following task uploads the code package to DXP, ready for deployment. Note that any secret variables, e.g. ApiSecret, need to be mapped to an environment variable to make them available in the PowerShell script.
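As a rough sketch, an upload task along these lines could use the EpiCloud PowerShell module. Apart from ApiSecret, the variable names (`ApiKey`, `ProjectId`, `packagePath`) are assumptions for illustration, as is installing the module inside the task.

```yaml
- task: PowerShell@2
  displayName: 'Upload package to DXP'
  env:
    # Secret variables are not exposed to scripts automatically, so map it explicitly
    API_SECRET: $(ApiSecret)
  inputs:
    targetType: 'inline'
    script: |
      # Assumption: the EpiCloud module is installed on the agent at run time
      Install-Module -Name EpiCloud -Scope CurrentUser -Force
      # ApiKey and ProjectId are assumed pipeline variable names
      Connect-EpiCloud -ClientKey "$(ApiKey)" -ClientSecret $env:API_SECRET -ProjectId "$(ProjectId)"
      # Ask DXP for the upload location, then upload the package to it
      $sasUrl = Get-EpiDeploymentPackageLocation
      # packagePath is a hypothetical variable pointing at the .nupkg file
      Add-EpiDeploymentPackage -SasUrl $sasUrl -Path "$(packagePath)"
```

Non-secret variables can be referenced directly with the `$(name)` macro syntax, which is why only the secret needs the `env` mapping.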

Preproduction deployment

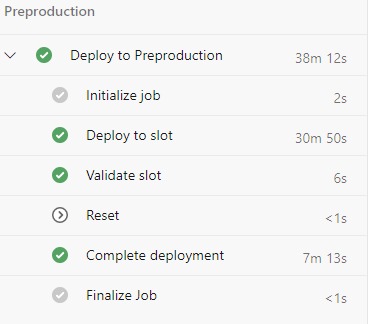

The Preproduction deployment stage has the following tasks.

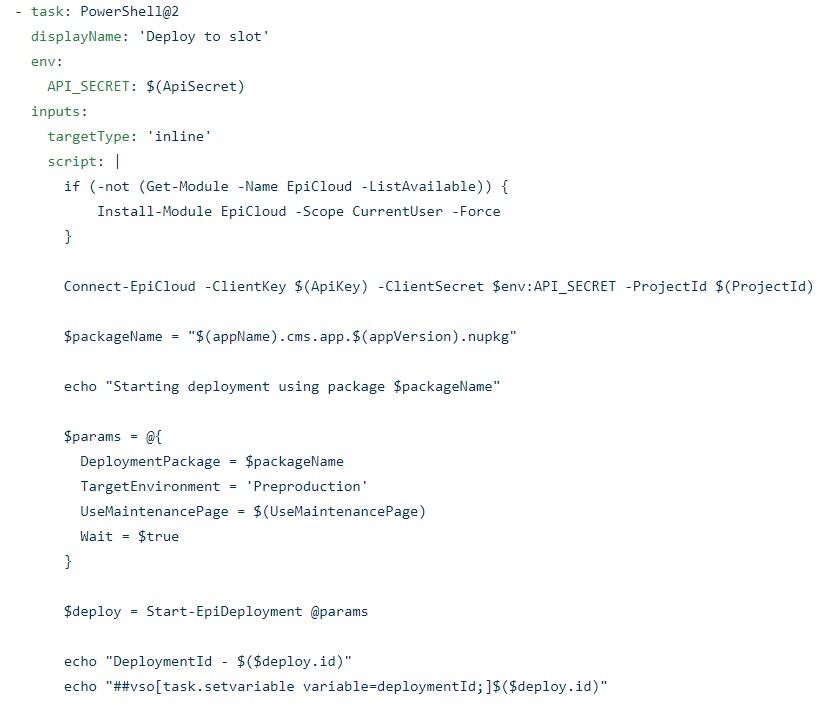

Deploy to slot

The first task deploys the uploaded code package to the Preproduction slot. It outputs a variable, deploymentId, that is used in the later tasks.

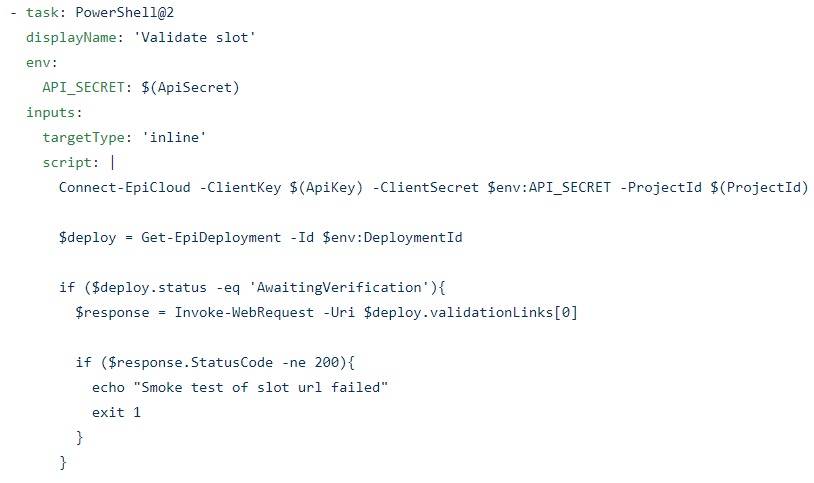
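A deploy-to-slot task might look something like the sketch below. It assumes the EpiCloud module is already available on the agent, that `Start-EpiDeployment` returns a deployment object with an `id` property, and that `ApiKey`, `ProjectId` and `packageName` are pipeline variables; only ApiSecret and deploymentId come from this post.

```yaml
- task: PowerShell@2
  displayName: 'Deploy to slot'
  env:
    API_SECRET: $(ApiSecret)
  inputs:
    targetType: 'inline'
    script: |
      Connect-EpiCloud -ClientKey "$(ApiKey)" -ClientSecret $env:API_SECRET -ProjectId "$(ProjectId)"
      # packageName is a hypothetical variable holding the uploaded package file name
      $deployment = Start-EpiDeployment -DeploymentPackage "$(packageName)" -TargetEnvironment 'Preproduction' -Wait
      # Assumption: the returned object exposes the deployment id as 'id'
      $id = $deployment.id
      # Make the id available to later steps in this job as $(deploymentId)
      Write-Host "##vso[task.setvariable variable=deploymentId]$id"
```

A variable set this way is visible to later steps in the same job. To read it from another job or stage, it would have to be set with `isOutput=true` on a named step.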

Validate slot

This task only performs a basic web request to the slot validation link.

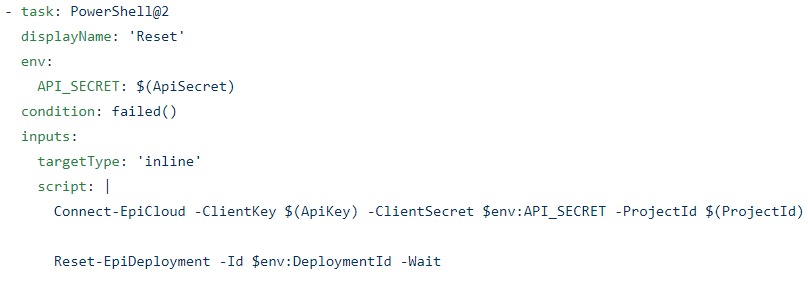
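A basic validation step of this kind could be sketched as follows. The `slotUrl` variable is hypothetical and stands for the slot validation link of the environment being deployed.

```yaml
- task: PowerShell@2
  displayName: 'Validate slot'
  inputs:
    targetType: 'inline'
    script: |
      # slotUrl is a hypothetical pipeline variable holding the slot validation link
      $response = Invoke-WebRequest -Uri "$(slotUrl)" -UseBasicParsing
      $status = $response.StatusCode
      if ($status -ne 200) {
        # Failing the step is what allows a later reset step to kick in
        throw "Slot validation failed with status code $status"
      }
      Write-Host "Slot responded with status code $status"
```

A more thorough check, such as running smoke tests against the slot, could replace the single request.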

Reset

This task is set up to only run if the previous 'Validate slot' task fails.

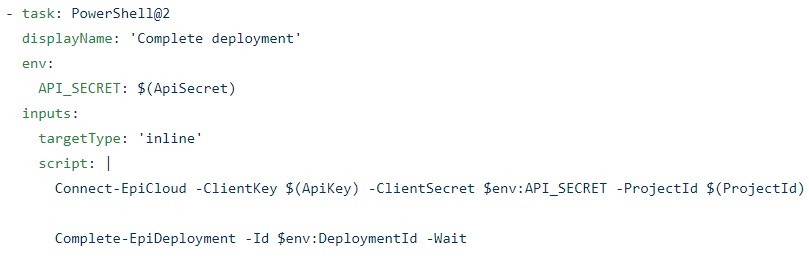
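One way to express such a conditional reset is sketched below. Note that `failed()` is true when any earlier step in the job has failed, not only 'Validate slot', so the condition also checks that a deployment id exists. `ApiKey` and `ProjectId` are assumed variable names.

```yaml
- task: PowerShell@2
  displayName: 'Reset'
  # Run only when an earlier step failed and a deployment was actually started
  condition: and(failed(), ne(variables['deploymentId'], ''))
  env:
    API_SECRET: $(ApiSecret)
  inputs:
    targetType: 'inline'
    script: |
      Connect-EpiCloud -ClientKey "$(ApiKey)" -ClientSecret $env:API_SECRET -ProjectId "$(ProjectId)"
      # Roll the slot deployment back instead of completing it
      Reset-EpiDeployment -Id "$(deploymentId)" -Wait
```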

Complete deployment

And finally...
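A completion task could be sketched like this, again assuming the `ApiKey` and `ProjectId` variable names and an agent that already has the EpiCloud module installed.

```yaml
- task: PowerShell@2
  displayName: 'Complete deployment'
  env:
    API_SECRET: $(ApiSecret)
  inputs:
    targetType: 'inline'
    script: |
      Connect-EpiCloud -ClientKey "$(ApiKey)" -ClientSecret $env:API_SECRET -ProjectId "$(ProjectId)"
      # Swap the validated slot into the live environment
      Complete-EpiDeployment -Id "$(deploymentId)" -Wait
```

With the default condition, this step only runs when all previous steps have succeeded, so a failed validation skips it.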

Production deployment

The Production stage is the same as the Preproduction stage. One thing to call out is that YAML pipelines currently don't support the Manual Intervention task, which would allow you to pause an active pipeline and manually validate the slot before completing the deployment stage.

Hotfixes

When a hotfix is merged/cherry-picked to the release branch, the Release pipeline will be triggered and the hotfix can be deployed to Preproduction and Production.

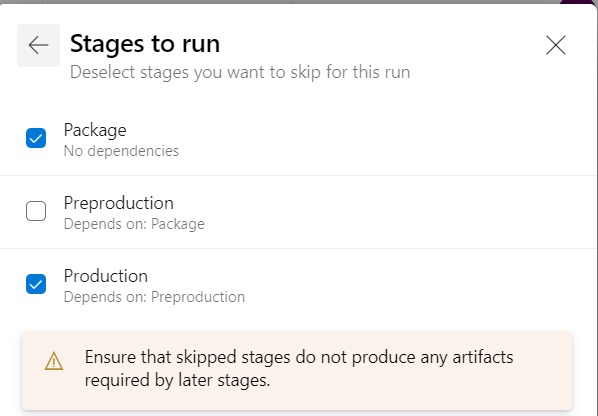

Deploy hotfix straight to Production

If there is a need to deploy an urgent fix straight to Production, the Release pipeline can be manually run to deploy to selected stages.

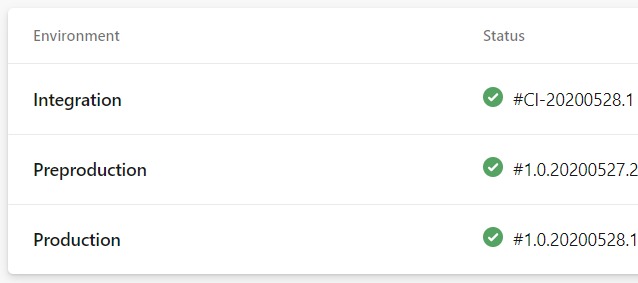

Environments

Environments in Azure Pipelines represent the environment(s) targeted by the pipeline. They provide a deployment history for each environment. I have kept the environment names consistent with the DXP environments; however, they can be named to match the environment usage, e.g. Test, UAT and Production.

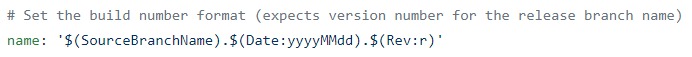

The version deployed to an environment comes from the pipeline build number. For release branches, I use the naming convention <major.minor>, e.g. release/1.0. This helps to identify the release branch that an environment is on for subsequent deployments, e.g. bug fixes.
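One possible way to derive the build number from the release branch name is sketched below. This is an assumption about how it could be configured, not necessarily the format used in the repository.

```yaml
# For a branch named release/1.0, Build.SourceBranchName resolves to the last
# path segment (1.0) and Rev:r appends an incrementing revision number.
name: $(Build.SourceBranchName).$(Rev:r)
```

With a format like this, each run on the same release branch gets the branch version plus a revision, which makes bug-fix deployments from that branch easy to tell apart.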

Approvals

Approvals and checks can be configured for each environment. This ensures any pipeline targeting the environment will require manual approval before running the stage.

Limitations

The main limitations with multi-stage YAML pipelines that I have encountered are:

- There is currently no support for the Manual Intervention task within a stage. This feature is on the Azure Pipelines roadmap.

- Stages cannot be skipped for an automatically triggered pipeline. Currently, to skip a stage, the pipeline has to be manually run.

By no means do these limitations outweigh the benefits.

Wrap up

Hopefully, this post goes some way towards providing guidance on fully integrating your DXP deployments in Azure DevOps using multi-stage YAML pipelines and the Deployment API.

For the latest YAML files and documentation, please refer to the GitHub repository https://github.com/rrangaiya/epi-dxp-devops

References

https://devblogs.microsoft.com/devops/whats-new-with-azure-pipelines/
